Language is deeply contextual. The word "bank" means different things in “I went to the bank” versus “I sat by the river bank”. The word "lead" changes pronunciation between “lead the team” and “lead pipes”. Even simple pronouns like "it" or "they" depend entirely on earlier context to make sense.

For AI to process language well, it must move beyond individual words and consider the surrounding text, the broader conversation, and even background knowledge. This ability to track context is one of the biggest reasons modern language models are far more capable than earlier systems—and why attention mechanisms became such a breakthrough in natural language processing.

Why Context Changes Everything

Context can completely reshape meaning.

- “The man saw the dog with the telescope” could mean either the man had the telescope or the dog did.

- “Time flies like an arrow” could be poetic—or absurd instructions to insects.

Humans resolve these ambiguities effortlessly, but early AI systems that treated words in isolation often failed in amusing ways.

Several types of context shape meaning:

- Local context: nearby words that guide grammar and immediate sense.

- Document context: overall topic or theme of a text.

- Conversation context: tracking who or what pronouns refer to.

- World context: background knowledge, culture, and shared assumptions.

By incorporating context at multiple levels, modern AI systems move beyond isolated words to capture the richer, nuanced meanings that humans naturally understand.

The Challenge of Word Order

Context is not the only important property of language. Language is also sequential: “Dog bites man” is not the same as “Man bites dog”.

Early “bag-of-words” models ignored word order entirely, treating both sentences as the same. This simplified processing but erased crucial meaning. Neural models needed to learn that order carries information about grammar, time, and causality.

- Grammar and syntax: “The quick brown fox” makes sense, while “quick the brown fox” violates English word order.

- Temporal and causal cues: “I woke up and had breakfast” implies that waking came first; “I had breakfast and woke up” implies the opposite order.

- Compositional meaning: “Green house” (a house painted green) differs from “greenhouse” (a glass building for plants).

Recognizing and modeling word order allows neural networks to capture the structure and logic of language, turning raw sequences into meaningful communication.
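The bag-of-words limitation described in this section can be seen in a few lines of Python. This is a minimal sketch, not how any particular system is built: the helper names `bag_of_words` and `bigrams` are made up for illustration, and splitting on whitespace stands in for real tokenization.

```python
from collections import Counter

def bag_of_words(sentence):
    """Count lowercase words, discarding the order they appeared in."""
    return Counter(sentence.lower().split())

def bigrams(sentence):
    """Return adjacent word pairs, which keep local word order."""
    words = sentence.lower().split()
    return list(zip(words, words[1:]))

a = "Dog bites man"
b = "Man bites dog"

same_bag = bag_of_words(a) == bag_of_words(b)  # order is lost
same_bigrams = bigrams(a) == bigrams(b)        # order is kept

print(same_bag, same_bigrams)  # True False
```

The two sentences collapse into the same bag of counts, while even the simplest order-aware view (pairs of neighboring words) tells them apart.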

Context Windows: How Much History Matters

Language unfolds over time, and AI models use context windows to decide how much previous text to consider.

- Fixed windows: Early models looked back 50–200 words—better than n-grams, but still limited.

- Sliding windows: As text grows, the window moves forward, dropping old words while adding new ones.

- Modern expansions: Today’s models can handle context windows of thousands or even 100,000+ tokens (words or word pieces), maintaining coherence across essays, stories, or long conversations.

Still, if important details fall outside the window, the model may “forget” them, leading to inconsistencies, even though today’s windows span thousands of tokens.
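A sliding window and the "forgetting" it causes can be sketched with a fixed-length queue. The function name `sliding_context` and the window size of 3 are assumptions chosen for illustration, and whole words stand in for tokens; real models use far larger windows and subword tokens.

```python
from collections import deque

def sliding_context(tokens, window_size):
    """Yield (context, next_token) pairs using a fixed-size sliding window."""
    window = deque(maxlen=window_size)  # the oldest token is dropped automatically
    for token in tokens:
        yield list(window), token
        window.append(token)

tokens = "Alice put the key in the drawer and left".split()
steps = list(sliding_context(tokens, window_size=3))

final_context, final_token = steps[-1]
print(final_context, "->", final_token)  # ['the', 'drawer', 'and'] -> left
```

By the time the window reaches "left", both "Alice" and "key" have fallen out of view, so anything that depends on them can no longer be recovered from the context alone.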

The Attention Revolution

The real leap came with attention mechanisms. You may remember from Chapter 4 that attention allows a model to give each word in the input its own weight rather than treating them all equally.

This turns out to be exactly what’s needed for handling context: instead of compressing past words into a single fixed summary, the model can dynamically focus on the most relevant pieces of information when predicting the next word.

- In “The cat that was sleeping in the sun finally…”, the model should attend most strongly to "cat" and "sleeping" when predicting the next word, not to filler words like "the" or "in".

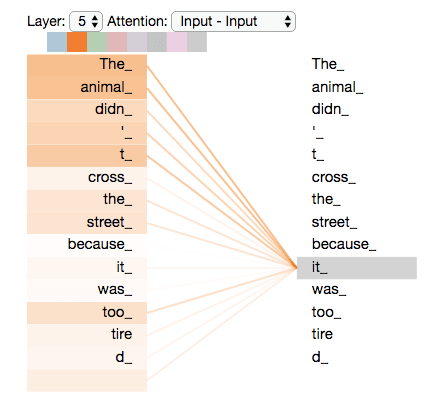

- In “The animal didn’t cross the street because it was too tired”, attention enables the model to link "it" back to "animal" rather than mistakenly to "street".

This dynamic weighting makes predictions more accurate, especially for resolving ambiguity or long-range references. Unlike fixed-window methods, attention highlights the words most relevant for the current decision.

It also works at multiple scales: some attention heads (parallel attention units inside the model) focus on nearby grammar, while others connect distant phrases, letting the model capture both local structure and long-range dependencies.

By combining these techniques, attention transforms context handling from a blunt memory span into a targeted spotlight, letting modern models interpret sentences with much more nuance than previous methods.
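The weighting idea can be made concrete with scaled dot-product attention, the form of attention used in Transformers. The sketch below is a toy under stated assumptions: the two-dimensional vectors are hand-picked rather than learned, the values simply reuse the keys, and the names `softmax` and `attend` are illustrative. In a real model the query, key, and value vectors come from learned projections.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is the weighted average of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output

# Hypothetical vectors, chosen so "cat" and "sleeping" align with the query.
words = ["the", "cat", "sleeping", "in"]
keys = [[0.1, 0.0], [1.0, 0.9], [0.8, 1.0], [0.0, 0.1]]
values = keys  # reuse keys as values to keep the toy small
query = [1.0, 1.0]

weights, output = attend(query, keys, values)
for word, weight in zip(words, weights):
    print(f"{word:>9}: {weight:.2f}")
```

The weights always sum to 1, so attention acts like a budget: whatever share goes to "cat" and "sleeping" is taken away from filler words such as "the" and "in".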

Multi-Head Attention: Parallel Contexts

Modern models go further with multi-head attention. Each “head” focuses on a different relationship in parallel:

- Syntactic: subjects with verbs, adjectives with nouns.

- Semantic: related concepts.

- Coreference: pronouns to the right nouns.

- Long-range links: coherence across paragraphs.

- Position: effects of word order.

For example, in “The red car that I bought yesterday is parked outside”, one head might track that "car" is the subject, another that "red" describes it, another that "yesterday" indicates time. Together, these perspectives create a rich, layered understanding.

This ability to process multiple kinds of context simultaneously is why Transformer-based models became so dominant.
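The idea of several heads looking at the same sentence in parallel can be sketched by slicing each vector into parts and running attention separately on each part. This is a simplification with assumed details: real Transformers apply learned projections per head and then combine the head outputs, whereas here the vectors are hand-written and the helper names (`split_heads`, `multi_head_weights`) are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention weights for one query."""
    scale = math.sqrt(len(query))
    return softmax([sum(q * k for q, k in zip(query, key)) / scale for key in keys])

def split_heads(vector, num_heads):
    """Cut one vector into equal-sized slices, one per head."""
    size = len(vector) // num_heads
    return [vector[i * size:(i + 1) * size] for i in range(num_heads)]

def multi_head_weights(query, keys, num_heads):
    """Return one attention distribution per head."""
    query_parts = split_heads(query, num_heads)
    key_parts = [split_heads(key, num_heads) for key in keys]
    result = []
    for h in range(num_heads):
        head_keys = [parts[h] for parts in key_parts]
        result.append(attention_weights(query_parts[h], head_keys))
    return result

# Hypothetical 4-d vectors: the first two dimensions feed head 0,
# the last two feed head 1.
words = ["red", "car", "yesterday"]
keys = [[0, 1, 0, 0], [1, 0, 0, 0], [0, 0, 0, 1]]
query = [2, 0, 0, 2]

heads = multi_head_weights(query, keys, num_heads=2)
for h, weights in enumerate(heads):
    focus = words[weights.index(max(weights))]
    print(f"head {h} attends most to {focus!r}")
```

With these toy vectors, head 0 puts most of its weight on "car" while head 1 favors "yesterday", showing how the same query can pick out different relationships in different heads.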

Context Across Different Scales

Context isn’t just about immediate neighbors—it works at many levels:

- Word-level: “The quick brown” suggests a noun comes next.

- Sentence-level: “The dog chased the cat” tells us who did what.

- Discourse-level: Later references like “he”, “the commander in chief”, or “the administration” all connect back to "the president".

- Document-level: “Cells” means something different in biology versus prisons.

- Conversation-level: In dialogue, meaning depends on shared knowledge, goals, and turn-taking.

Modern models aim to juggle all these scales at once.

The Limits of Context Understanding

Even advanced models still face challenges:

- Length limits: Context windows are large but not infinite; older details can be lost.

- Implicit knowledge: Idioms like “raining cats and dogs” or cultural references often trip up models.

- Dynamic revision: Humans easily reinterpret earlier text when new clues appear; AI struggles to update its understanding.

- Beyond text: Real human communication uses tone, gesture, and environment—clues text-only models miss.

These gaps show why AI context handling is powerful but still not the same as human comprehension.

Context in Modern Language Models

Modern systems tackle context in several ways:

- Contextual word representations: Unlike static embeddings, words now change representation based on context ("bank" in finance ≠ "bank" by a river).

- Context-aware generation: Outputs depend not just on the immediate input but the broader conversation.

- In-context learning: Models can adapt on the fly, using examples given within a conversation to apply new rules or definitions.

- Emergent reasoning: By tracking context carefully, models can solve multi-step problems and maintain logical consistency.

These abilities explain why language models feel far more intelligent than older systems built on word counts or simple n-grams.
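The difference between static and contextual word representations can be illustrated with a deliberately crude toy. Everything here is an assumption made for the example: the two-dimensional vectors in `STATIC` are invented, and averaging a word with its neighbors is only a stand-in for what attention layers do in real models.

```python
# Hypothetical 2-d embeddings: [finance-ness, nature-ness]
STATIC = {
    "bank": [0.5, 0.5],
    "loan": [1.0, 0.0],
    "money": [0.9, 0.1],
    "river": [0.0, 1.0],
    "water": [0.1, 0.9],
}

def contextual(word, sentence, mix=0.5):
    """Blend a word's static vector with the mean vector of its known neighbors."""
    base = STATIC[word]
    neighbors = [STATIC[w] for w in sentence if w != word and w in STATIC]
    if not neighbors:
        return base
    mean = [sum(column) / len(neighbors) for column in zip(*neighbors)]
    return [(1 - mix) * b + mix * m for b, m in zip(base, mean)]

finance = contextual("bank", ["bank", "loan", "money"])
nature = contextual("bank", ["river", "bank", "water"])

print("static:  ", STATIC["bank"])
print("finance: ", finance)
print("nature:  ", nature)
```

A static lookup returns the same vector for "bank" in both sentences, while the blended version leans toward finance in one and toward nature in the other.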

Final Takeaways

Context is what turns strings of words into real meaning. The development of attention and expanded context windows allowed AI systems to capture syntax, semantics, and discourse in ways earlier models never could.

Modern models can track multiple layers of context simultaneously, making them effective conversational partners and problem solvers. But they still struggle with very long conversations, cultural background knowledge, and truly dynamic interpretation—areas where human understanding still has the edge.