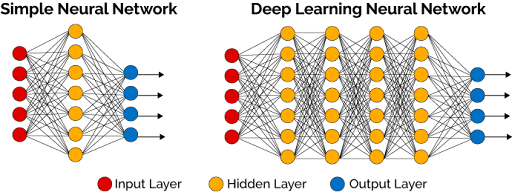

We've seen how multi-layer perceptrons can solve problems that single perceptrons cannot. But the networks powering today's AI breakthroughs—systems that recognize faces, translate languages, and generate art—go much deeper. These "deep neural networks" use the same basic principles as MLPs but scale them up dramatically.

The difference between a basic MLP and a deep neural network isn't fundamentally new technology—it's about scale, depth, and the emergent capabilities that arise when you stack many layers together. Understanding this scaling process helps explain why modern AI has become so powerful and why the term "Deep Learning" captures the essence of today's AI revolution.

What Makes a Neural Network "Deep"?

The transition from MLPs to deep neural networks is primarily about adding more hidden layers, but this simple change creates profound differences in capability. Let's find some definition for "Deep" first:

- Shallow networks: 1-2 hidden layers (traditional MLPs).

- Deep networks: 3+ hidden layers (modern DL).

- Very deep networks: 50-1000+ layers (cutting-edge research).

While a 3-layer MLP and a 10-layer deep network use the same basic components (perceptrons, weights, activation functions), the deep network can learn exponentially more complex patterns. It's like the difference between a simple tool and a sophisticated machine—same basic principles, vastly different capabilities.

This depth is what allows modern neural networks to capture intricate structures in data and power breakthroughs across vision, language, and many other domains.

Hierarchical Feature Learning: The Deep Advantage

Deep networks excel at automatically discovering hierarchical representations—learning to recognize complex patterns by building them up from simpler components.

🐱 Image Recognition Hierarchy Example: Let's trace how a deep network processes a photograph of a cat:

Layer 1 (Low-level features) detects basic visual elements:

- Horizontal lines, vertical lines, diagonal edges.

- Color gradients and brightness changes.

- Simple geometric shapes.

Layer 2-3 (Mid-level features) combines basic elements into meaningful parts:

- Curves and corners by combining line segments.

- Textures like fur or smooth surfaces.

- Simple shapes like circles and triangles.

Layer 4-5 (High-level features) recognizes complex object parts:

- Eyes (combining curves, textures, and color patterns).

- Ears (triangular shapes with specific textures).

- Facial features and body parts.

Layer 6+ (Object recognition) assembles parts into complete concepts:

- "This combination of features represents a cat".

- "The cat is sitting" or "the cat is orange".

In this way, deep networks transform raw input into layered abstractions, enabling them to recognize and reason about complex patterns with remarkable accuracy.

Why Depth Matters

From a mathematical perspective, adding layers provides exponential increases in the types of functions the network can represent. Functions can become more complex:

- 1 layer: Can only create linear decision boundaries (like our original perceptron).

- 2 layers: Can approximate any continuous function (universal approximation).

- 3+ layers: Can represent the same functions with fewer neurons, plus capture hierarchical structure more naturally.

✍🏼 Handwritting Digits Example: Consider recognizing handwritten digits again. A shallow network might need thousands of neurons to directly map pixel patterns to digits. A deep network can use fewer total neurons by building up understanding:

- Layer 1: 100 neurons detecting stroke patterns.

- Layer 2: 50 neurons combining strokes into digit parts.

- Layer 3: 20 neurons recognizing complete digits.

This hierarchical approach is both more efficient and more interpretable.

Training Challenges: The Price of Depth

Adding layers creates new challenges that don't exist in shallow networks.

The Vanishing Gradient Problem: As we'll see in the following chapters, deep networks learn through backpropagation—sending error signals backward through layers. In very deep networks, these signals can become extremely small by the time they reach early layers, making learning slow or impossible.

Computational Requirements: State of the art models require enormous computer power:

- Memory: Deep networks require significant RAM to store all layer activations

- Processing: More layers mean more calculations for each prediction

- Training time: Deep networks can take days or weeks to train properly

Overfitting Risk: With millions or billions of parameters, deep networks can easily memorize training data instead of learning generalizable patterns. This requires careful regularization and validation strategies.

Hardware Evolution: The deep learning revolution was enabled by advances in computing hardware like GPUs and techniques like using multiple machines to train very large models

The Deep Learning Revolution

The shift from shallow to deep networks represents one of the most significant advances in AI history:

Before deep learning (1990s-2000s):

- Hand-crafted features for each problem domain.

- Shallow networks with limited capability.

- Performance plateaued on complex tasks.

Deep learning Era (2010s-present):

- Automatic feature learning from raw data.

- Breakthrough performance on vision, language, and games.

- Continuous improvement with more data and compute.

The most important enablers of the revolution:

- Better algorithms: Improved training techniques and architectures.

- More data: Internet-scale datasets for training.

- Computational power: GPUs and specialized hardware.

- Software frameworks: Tools that make deep learning accessible.

Together, these factors sparked the deep learning revolution, reshaping AI into a field capable of solving problems once thought far beyond reach.

Final Takeaways

Deep neural networks extend the multi-layer perceptron concept by adding many more hidden layers, enabling automatic discovery of hierarchical feature representations. This depth allows networks to break complex problems into manageable sub-problems, learning to recognize patterns at multiple levels of abstraction. While the basic components remain the same—neurons, weights, and activation functions—the emergent capabilities of deep networks far exceed those of shallow architectures.

However, this power comes with increased computational requirements and training challenges that require sophisticated techniques to overcome. Understanding the principles of deep networks provides insight into why modern AI systems are so capable and how they differ from earlier, simpler approaches to machine learning.