Every reinforcement learning agent faces the classic exploration vs. exploitation trade-off: stick with what works, or try something new that might be better? This balance drives how agents learn and adapt.

The dilemma is universal—restaurants balancing popular dishes with new recipes, students choosing between familiar and new courses, or streaming platforms mixing safe recommendations with fresh suggestions.

In reinforcement learning, too much exploitation means missing better opportunities, while too much exploration wastes effort on unproven actions. Striking the right balance is key to intelligent decision-making.

The Core Dilemma

The exploration-exploitation trade-off emerges because agents have limited time and opportunities to learn. Every action serves one of two purposes:

- Exploitation: Using current knowledge to choose actions that are expected to perform well based on past experience.

- Exploration: Trying actions that might provide new information, potentially discovering better strategies even if they might perform poorly in the short term.

The tension can be stated simply:

Pure exploitation maximizes immediate performance but may miss opportunities for long-term improvement. Pure exploration gathers maximum information but sacrifices performance while learning. The optimal strategy balances both considerations.

The challenge is that this balance depends on the situation—how much is already known, how much time remains for learning, and how costly mistakes are during the learning process.

The Multi-Armed Bandit Problem

The exploration–exploitation trade-off is often illustrated by the multi-armed bandit problem, named after slot machines. Imagine a row of machines, each with an unknown payout rate. With limited pulls, you must decide between exploiting the machine that seems best so far or exploring others that might pay out more.

The setup:

- Each machine has a fixed but unknown probability of paying out.

- You can only discover payout rates by trying machines.

- Every pull dedicated to learning is a pull not spent on the best machine.

- You must balance learning which machines are best with using that knowledge.
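The setup above can be sketched as a tiny simulator. This is a minimal illustration, not a reference implementation: the class name `BernoulliBandit`, the `pull` method, and the payout rates are all assumptions made up for the example.

```python
import random


class BernoulliBandit:
    """A row of slot machines, each paying 1 with a fixed hidden probability."""

    def __init__(self, payout_probs, seed=None):
        self._probs = list(payout_probs)  # hidden from the agent
        self._rng = random.Random(seed)
        self.n_arms = len(self._probs)

    def pull(self, arm):
        """Pull one machine; return 1 on a payout, 0 otherwise."""
        return 1 if self._rng.random() < self._probs[arm] else 0


# Hypothetical payout rates, chosen only for illustration.
bandit = BernoulliBandit([0.2, 0.5, 0.7], seed=0)

# The only way to learn a rate is to spend pulls on that machine.
rewards = [bandit.pull(2) for _ in range(1000)]
estimate = sum(rewards) / len(rewards)  # empirical payout rate of machine 2
```

The agent only ever sees the 0/1 outcomes from `pull`, so every estimate of a machine's rate has to be paid for with pulls on that machine.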

Pure strategies fail:

- Pure exploration: Try each machine equally to learn their rates, but never focus on the best ones.

- Pure exploitation: After initial tries, always use the machine that seemed best, potentially missing superior alternatives.

The multi-armed bandit illustrates why naive approaches don't work and why sophisticated exploration strategies are needed.

Epsilon-Greedy Strategy

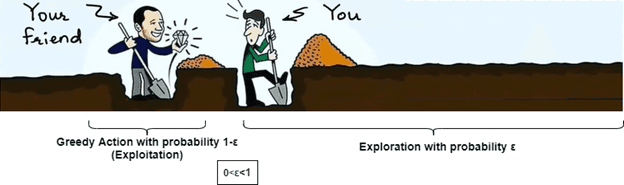

One of the simplest exploration strategies is epsilon-greedy, which makes the trade-off explicit through a single parameter.

The algorithm:

- With probability ε (epsilon), choose a random action (exploration)

- With probability 1-ε, choose the best-known action (exploitation)

A single parameter ε therefore directly controls the balance between exploration and exploitation: larger values explore more, smaller values exploit more.

Epsilon-greedy is simple to implement and understand, guarantees some exploration, and works well in many situations. However, random exploration is inefficient: it might repeatedly try actions already known to be poor instead of focusing exploration on promising unknowns.
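A minimal sketch of epsilon-greedy on a simulated Bernoulli bandit follows. The function name, the running-mean value estimate, and the default ε of 0.1 are assumptions chosen for illustration rather than anything prescribed above.

```python
import random


def epsilon_greedy(probs, pulls, epsilon=0.1, seed=0):
    """Run epsilon-greedy on simulated machines with payout rates `probs`."""
    rng = random.Random(seed)
    n = len(probs)
    counts = [0] * n      # pulls per machine
    values = [0.0] * n    # running mean reward per machine
    total = 0
    for _ in range(pulls):
        if rng.random() < epsilon:
            arm = rng.randrange(n)                      # explore: random action
        else:
            arm = max(range(n), key=lambda a: values[a])  # exploit: best known
        reward = 1 if rng.random() < probs[arm] else 0
        counts[arm] += 1
        # Incremental mean update: new_mean = old_mean + (x - old_mean) / k
        values[arm] += (reward - values[arm]) / counts[arm]
        total += reward
    return values, counts, total
```

Setting `epsilon=0` reduces this to pure exploitation and `epsilon=1` to pure random exploration. Note that the exploratory branch picks uniformly among all machines, including ones already known to be poor, which is the inefficiency described above.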

Thompson Sampling

Thompson sampling takes a probabilistic approach to exploration by maintaining beliefs about action values and sampling actions according to the probability that they're optimal.

The approach:

- Maintain probability distributions over the value of each action

- Sample a value for each action from its distribution

- Choose the action with the highest sampled value

- Update the distribution for the chosen action based on observed reward

Intuition: Actions that are likely to be optimal get selected more often, but there's always some chance of trying other actions if they might be better. As evidence accumulates, probability distributions become more concentrated around true values.

Advantages: Naturally balances exploration and exploitation, handles uncertainty in a principled way, often performs very well in practice, adapts to the structure of the problem.

Thompson sampling is a strong, widely used choice for many bandit problems and extends well to more complex scenarios.
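For machines that pay out 0 or 1, the four steps can be sketched with a Beta distribution per machine. The Beta(1, 1) starting belief and the function name are assumptions for this example; other reward types would need a different distribution family.

```python
import random


def thompson_sampling(probs, pulls, seed=0):
    """Beta-Bernoulli Thompson sampling on simulated machines."""
    rng = random.Random(seed)
    n = len(probs)
    alpha = [1] * n   # 1 + observed payouts per machine
    beta = [1] * n    # 1 + observed non-payouts per machine
    counts = [0] * n
    for _ in range(pulls):
        # Sample one plausible payout rate per machine from its current belief.
        samples = [rng.betavariate(alpha[a], beta[a]) for a in range(n)]
        # Act greedily with respect to the sampled values.
        arm = samples.index(max(samples))
        reward = 1 if rng.random() < probs[arm] else 0
        # Update only the belief for the machine that was pulled.
        if reward:
            alpha[arm] += 1
        else:
            beta[arm] += 1
        counts[arm] += 1
    return alpha, beta, counts
```

A machine with few pulls has a wide Beta distribution, so its samples occasionally come out on top and it gets tried again. As pulls accumulate the distribution narrows, and a clearly inferior machine is selected less and less often.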

Exploration in Deep Reinforcement Learning

When agents operate in complex environments with neural network function approximation, exploration becomes more sophisticated but also more challenging.

Challenges in deep RL:

- High-dimensional state spaces make random exploration inefficient

- Neural networks can be overconfident about poorly-explored regions

- Credit assignment across long sequences of actions complicates learning

Advanced exploration strategies:

Curiosity-driven exploration: Agents receive intrinsic rewards for visiting states that are surprising or hard to predict.
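One common way to realize this idea is to use the prediction error of a learned forward model as the intrinsic reward. The sketch below assumes a tiny linear model in place of a neural network, and the class name, learning rate, and input layout are all hypothetical.

```python
class ForwardModelCuriosity:
    """Intrinsic reward = squared error of a linear next-state predictor."""

    def __init__(self, state_dim, lr=0.1):
        self.lr = lr
        # One weight row per next-state dimension; inputs are [state, action, 1].
        self.w = [[0.0] * (state_dim + 2) for _ in range(state_dim)]

    def intrinsic_reward(self, state, action, next_state):
        x = list(state) + [float(action), 1.0]
        pred = [sum(wi * xi for wi, xi in zip(row, x)) for row in self.w]
        errors = [p - y for p, y in zip(pred, next_state)]
        reward = sum(e * e for e in errors)  # surprise before learning from it
        # One gradient step on the squared error, so repeats become less surprising.
        for row, e in zip(self.w, errors):
            for j, xj in enumerate(x):
                row[j] -= self.lr * e * xj
        return reward


curiosity = ForwardModelCuriosity(state_dim=2)
# Repeating one transition: the reward shrinks as the model learns it.
familiar = [curiosity.intrinsic_reward([0.5, -0.5], 1, [1.0, 0.0]) for _ in range(20)]
# A transition unlike anything seen so far is still surprising.
novel = curiosity.intrinsic_reward([-1.0, 2.0], 0, [3.0, 3.0])
```

In practice this bonus is added to the environment reward, usually with a scaling factor, so the agent is drawn toward transitions its model cannot yet predict.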

Count-based exploration: Track how often different states have been visited and explore less-visited areas more.
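A simple tabular version keeps a visit counter per state and pays a bonus that shrinks with the count. The inverse square root form used here is one common choice, assumed for illustration; the class name and `scale` parameter are hypothetical, and states must be hashable.

```python
import math
from collections import defaultdict


class CountBonus:
    """Exploration bonus that decays with the number of visits to a state."""

    def __init__(self, scale=1.0):
        self.scale = scale
        self.counts = defaultdict(int)

    def bonus(self, state):
        """Record a visit to `state` and return scale / sqrt(visit count)."""
        self.counts[state] += 1
        return self.scale / math.sqrt(self.counts[state])


explorer = CountBonus(scale=1.0)
bonuses = [explorer.bonus((0, 0)) for _ in range(4)]  # same state four times
fresh = explorer.bonus((3, 1))                        # first visit elsewhere
```

Exact counts only work when states repeat. In high-dimensional settings they are typically replaced by approximate counts, which this sketch does not cover.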

Information-theoretic exploration: Seek actions that maximize information gain about the environment or reduce uncertainty about optimal policies.

These approaches help agents explore systematically in complex environments rather than wandering randomly.

The Explore-Exploit Lifecycle

The balance between exploration and exploitation typically shifts over time as agents accumulate knowledge and experience.

Early learning phase:

- High exploration: Try many different actions to understand the environment

- Accept poor performance while gathering information

- Focus on breadth of experience over optimization

Knowledge building phase:

- Balanced exploration-exploitation: Continue learning while improving performance

- Direct exploration toward promising but uncertain areas

- Begin specializing based on discovered patterns

Mature performance phase:

- Low exploration: Focus on exploiting well-understood strategies

- Occasional exploration to detect environmental changes

- Prioritize consistent high performance

Adaptation phase:

- Increased exploration when performance degrades or environment changes

- Rapid rebalancing based on new conditions

- Return to learning mode when existing knowledge becomes outdated

This lifecycle mirrors how expertise develops in many domains—broad initial learning followed by specialization and ongoing adaptation.
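For an epsilon-greedy agent, one simple way to express this lifecycle is a decaying exploration rate with a floor and a manual reset. The class name, the multiplicative decay, and the default values below are assumptions for illustration, and how to detect that the environment has changed is left out.

```python
class EpsilonSchedule:
    """Exploration rate that decays toward a floor and can be reset."""

    def __init__(self, start=1.0, floor=0.05, decay=0.995):
        self.start = start
        self.floor = floor
        self.decay = decay
        self.epsilon = start  # early learning: explore heavily

    def step(self):
        """Decay epsilon once, never dropping below the floor."""
        self.epsilon = max(self.floor, self.epsilon * self.decay)
        return self.epsilon

    def reset(self, level=None):
        """Return to a higher exploration rate, e.g. after performance degrades."""
        self.epsilon = self.start if level is None else level
        return self.epsilon


schedule = EpsilonSchedule(start=1.0, floor=0.05, decay=0.5)
trace = [schedule.step() for _ in range(6)]  # decays, then holds at the floor
after_change = schedule.reset()              # adaptation phase: explore again
```

The floor keeps a small amount of exploration alive in the mature phase, which is what allows the agent to notice environmental changes at all.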

Real-World Applications and Considerations

The exploration-exploitation trade-off appears throughout practical AI applications:

🗣️ Recommendation systems: Balance showing users content they're likely to enjoy with introducing them to new possibilities that might increase long-term engagement.

🚗 Autonomous vehicles: Balance following well-tested routes with exploring potentially better paths, while maintaining safety constraints.

💊 Drug discovery: Balance testing modifications of known effective compounds with exploring entirely new chemical structures.

📈 Online marketing: Balance using proven ad campaigns with testing new creative approaches or targeting strategies.

Final Takeaways

The exploration vs. exploitation dilemma is one of the most fundamental challenges in reinforcement learning and in intelligent decision-making more broadly. Effective agents must perform well with current knowledge while continuing to learn and adapt.

Understanding this trade-off explains many behaviors we see in both AI systems and human decision-making. The most successful approaches don't eliminate the tension but manage it intelligently—exploring when uncertainty is high and information is valuable, exploiting when confidence is strong and performance matters most.

As AI systems become more sophisticated and are deployed in increasingly complex environments, managing exploration and exploitation becomes even more critical for ensuring both learning progress and reliable performance in real-world applications.